DigiGlo

Nov 2020

Our paper, "DigiGlo: Exploring the Palm as an Input and Display Mechanism through Digital Gloves", is part of the Proceedings of the Annual Symposium on Computer-Human Interaction in Play (CHI PLAY ’20).

Abstract

The shape and function of the human hand are intimately linked to how we interact with the physical world, and they set us apart from our evolutionary ancestors. In the digital age, our hands remain our main means of interaction: we use them to operate keyboards, mice, trackpads, and game controllers.

This modality, however, separates content display from interaction.

In our paper, we present DigiGlo (Digital Gloves), a system designed to evaluate the benefits of a unified hand display and interaction system. We explore this symbiosis in the context of gaming, where users control games with hand gestures while the content is displayed on their bare hand. Building on established learning principles, we explore different hand gestures and other specially tailored interactions through three carefully designed activities and two user studies. From these, we show that this idea has the potential to bring more intuitive, enjoyable, and effective gaming and learning experiences, and we offer recommendations on how to better design such systems.

CHI PLAY video

Talk Script

As human beings, our hands play an important role in how we interact with the world. We use hands to communicate, we use hands to count, we use hands to create. When it comes to the digital world, hands still play an essential role as we use them to operate mice, keyboards, and other controllers.

Hand gestures and movements have long been explored because they promise more direct and natural interaction. More recently, new technology has brought hand-based interaction to digital applications: sensors such as the Leap Motion controller offer clear advantages for gaming, creation, and navigation. Importantly, research shows that gestures with a meaning in the physical world should be preferred over more abstract ones.

At the same time, the physical world has been explored as a support for digital content. For example, using virtual reality and projection mapping, Roo and colleagues showed how a simple sandbox can support meditation and mindfulness. The body has also been used as a display to support creativity and play, as in this digital makeup application. However, to our knowledge, using the hand itself as a display has not yet been explored.

More broadly, embodiment literature strongly recommends placing the body at the core of digital experiences. Reconnecting with the body helps learning, for example in mathematics, where using hand gestures can help students produce better proofs. Such an approach can also help people experience their body as play and benefit from somaesthetic experiences.

We present DigiGlo, a system in which the hand is used both as a display and as a controller. In our paper, we implemented a Virtual Reality version of the system, but our mechanism would also be valuable in Spatial Augmented Reality, where it would not require equipping the person with any hardware. Relying strongly on embodiment, our system brings the body back to the center of the digital activity. Moreover, by co-locating input and feedback, our system reduces split-attention effects.

To evaluate our system, we implemented three playful activities. Space Traveller is a self-contained pinball game that makes use of the shape of the hand and its strong connection to both display and controls. On top of the main pinball mechanics, the hand controller adds a twist: it lets the player bend space at will and influence gravity.
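
The talk does not say how the pinch that bends space is detected or how exactly it changes gravity. As a minimal sketch only, assuming a hand tracker that reports fingertip positions in metres, a pinch can be detected by thresholding the thumb-to-index distance with two thresholds (hysteresis), so the state does not flicker near the boundary. The thresholds, the names, and the rule that gravity pulls the ball toward the pinch point while the pinch is held are all hypothetical, not the Space Traveller implementation.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

# Hypothetical thresholds in metres; a real tracker would need tuning.
PINCH_ON = 0.02   # start pinching when fingertips are closer than 2 cm
PINCH_OFF = 0.04  # stop pinching when they are farther than 4 cm


@dataclass
class PinchDetector:
    pinching: bool = False

    def update(self, thumb_tip: Vec3, index_tip: Vec3) -> bool:
        """Return the pinch state, using two thresholds so it does not flicker."""
        d = math.dist(thumb_tip, index_tip)
        self.pinching = d < (PINCH_OFF if self.pinching else PINCH_ON)
        return self.pinching


def current_gravity(pinching: bool, ball: Vec3, pinch_point: Vec3,
                    strength: float = 9.81) -> Vec3:
    """Hypothetical rule: normal downward gravity, or a pull toward the pinch."""
    if not pinching:
        return (0.0, -strength, 0.0)
    dx, dy, dz = (p - b for p, b in zip(pinch_point, ball))
    n = math.sqrt(dx * dx + dy * dy + dz * dz)
    if n == 0.0:
        return (0.0, 0.0, 0.0)  # ball already at the pinch point
    return (strength * dx / n, strength * dy / n, strength * dz / n)
```

The gap between the two thresholds is the design choice that matters here: tracking noise around a single threshold would toggle gravity on and off every few frames.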

Marble Runner explores natural hand interactions specific to DigiGlo. In this fast-paced game, the player’s dexterity is put to the test as they guide a marble along a twisted path filled with obstacles. Using a viewport metaphor, the player controls the marble simply by moving their hand around.

Finally, our educational activity, Noelle’s Ark, focuses on embodiment for learning. In this activity, the player has to compare the weight of two items by moving their hands up and down, mimicking a twin pan balance. Involving the body in such an activity not only improves the interaction, but also supports learning and facilitates retention of information.
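
The balance metaphor can be read as a simple rule: the lower hand holds the heavier item. The sketch below assumes palm heights in metres from a hand tracker and a hypothetical dead zone within which the hands count as level; the names and values are illustrative, not taken from Noelle’s Ark.

```python
from enum import Enum


class Verdict(Enum):
    LEFT_HEAVIER = "left heavier"
    RIGHT_HEAVIER = "right heavier"
    BALANCED = "balanced"


# Hypothetical tolerance: height differences below 3 cm count as level.
DEAD_ZONE = 0.03


def read_balance(left_y: float, right_y: float, dead_zone: float = DEAD_ZONE) -> Verdict:
    """Read two palm heights (metres) as the pans of a balance: the lower pan is heavier."""
    diff = right_y - left_y
    if abs(diff) < dead_zone:
        return Verdict.BALANCED
    return Verdict.LEFT_HEAVIER if diff > 0 else Verdict.RIGHT_HEAVIER


def true_verdict(left_weight: float, right_weight: float) -> Verdict:
    """Ground truth from the items' (hypothetical) weights."""
    if left_weight == right_weight:
        return Verdict.BALANCED
    return Verdict.LEFT_HEAVIER if left_weight > right_weight else Verdict.RIGHT_HEAVIER


def is_correct(left_y: float, right_y: float, left_weight: float, right_weight: float) -> bool:
    """True when the player's hand pose matches the actual weight comparison."""
    return read_balance(left_y, right_y) == true_verdict(left_weight, right_weight)
```

For example, holding the left hand 20 cm below the right while the left item is heavier, `is_correct(0.9, 1.1, 5.0, 2.0)`, counts as a correct answer. The dead zone keeps tracking jitter from flipping the verdict when the hands are nearly level.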

We performed two user studies to evaluate our system: a preliminary usability study focusing on quantitative aspects, followed by a qualitative thematic analysis. For the latter, we interviewed five participants about their experience, focusing on interaction, embodiment, and the potential for gaming and learning. We summarized our findings as design recommendations, some of which I’ll present now.

Confirming previous research, participants enjoyed gestures with a strong physical connection to their effects, as they found them easier to remember. Participants mentioned that simpler gestures are more intuitive, and they enjoyed such gestures because they found them natural and easy to use. We therefore recommend selecting simple and meaningful hand gestures, from both a usability and a learning perspective. Interestingly, because of the close mapping between control and display in our system, some new gestures become meaningful, such as pinching the fingers to bend space.

We also noticed that participants had strong expectations regarding physics. In particular, they expected the physics of the digital world to behave exactly as it would in the physical world, even when this made the interaction more cumbersome and impractical. For example, in Space Traveller, participants said that they wished gravity would respond to them more. Similarly, in Noelle’s Ark, participants held their hands perfectly horizontal, even though this reduced readability. This is a form of tactile illusion specific to our system, and we recommend that designers stay mindful of participants' expectations from the physical world when connecting their bodies so strongly to the digital world.

Overall, we show that DigiGlo offers novel and exciting experiences for both gaming and learning. Participants also suggested several other use cases and applications. In particular, DigiGlo could be explored as a mechanism to foster creativity, body awareness, and mindfulness.

Future Work Gallery

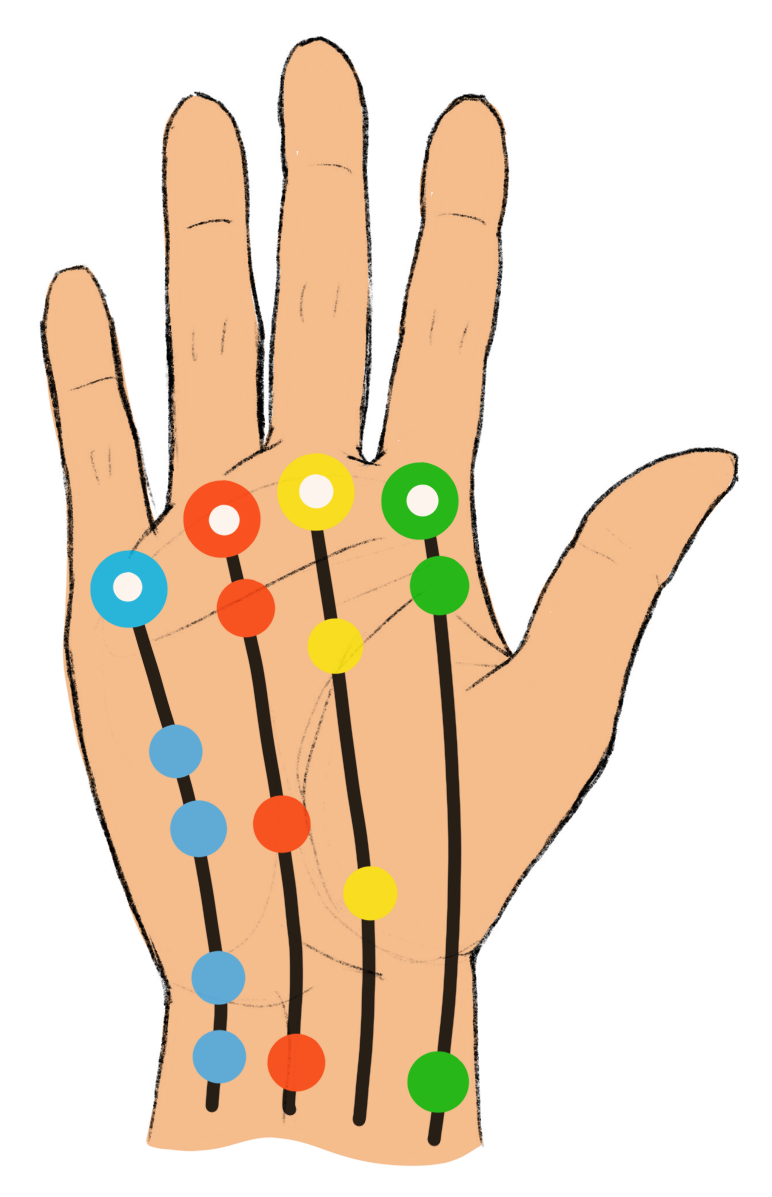

An activity inspired by Guitar Hero could help people practice the hand dexterity needed to play the guitar.

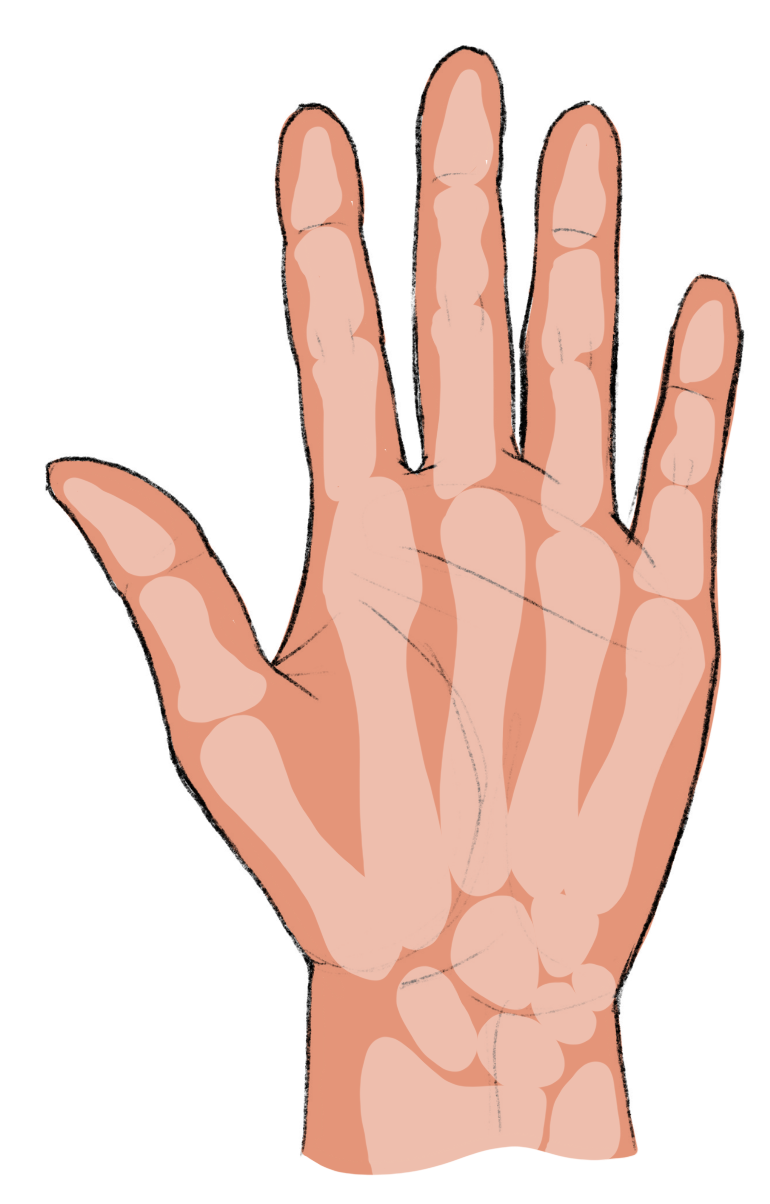

DigiGlo could also be used to learn about the anatomy of the hand.

Using the back of the hand, DigiGlo could support nail polish previews.

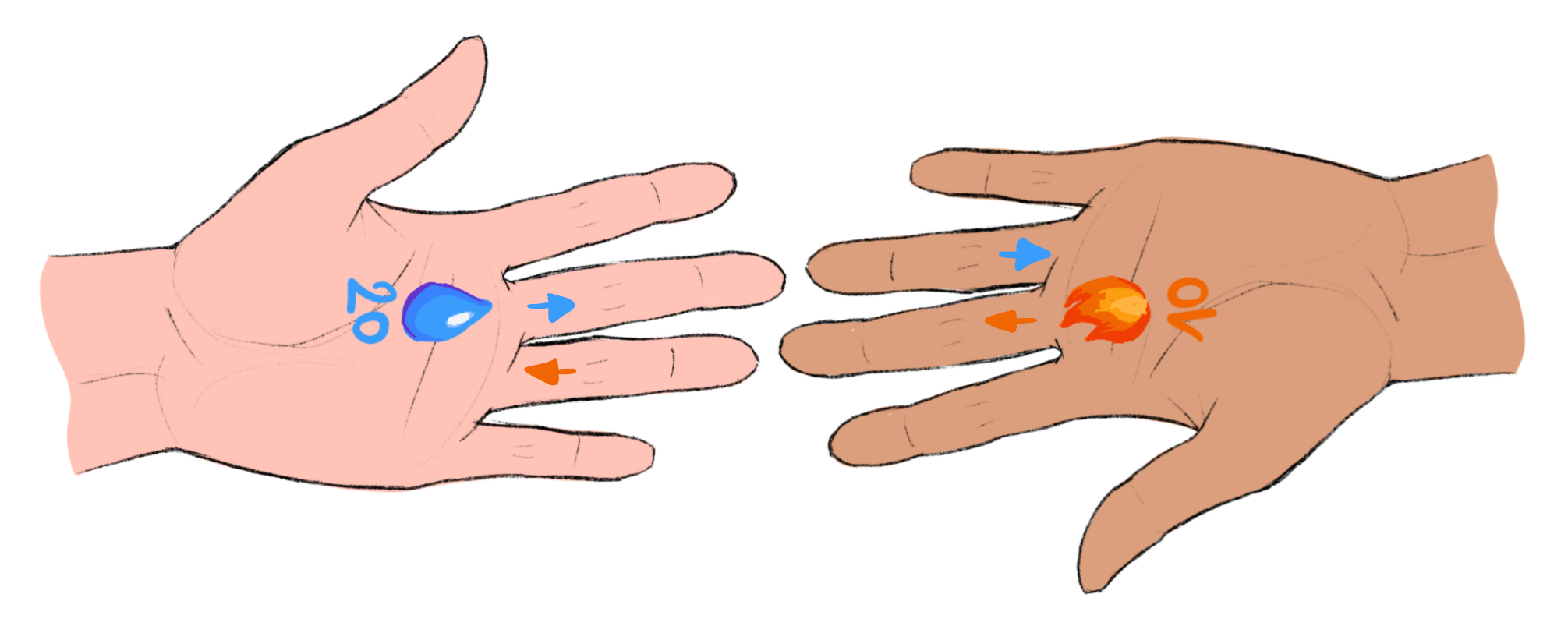

DigiGlo could also support multiplayer games, as in this handshake used to exchange resources.

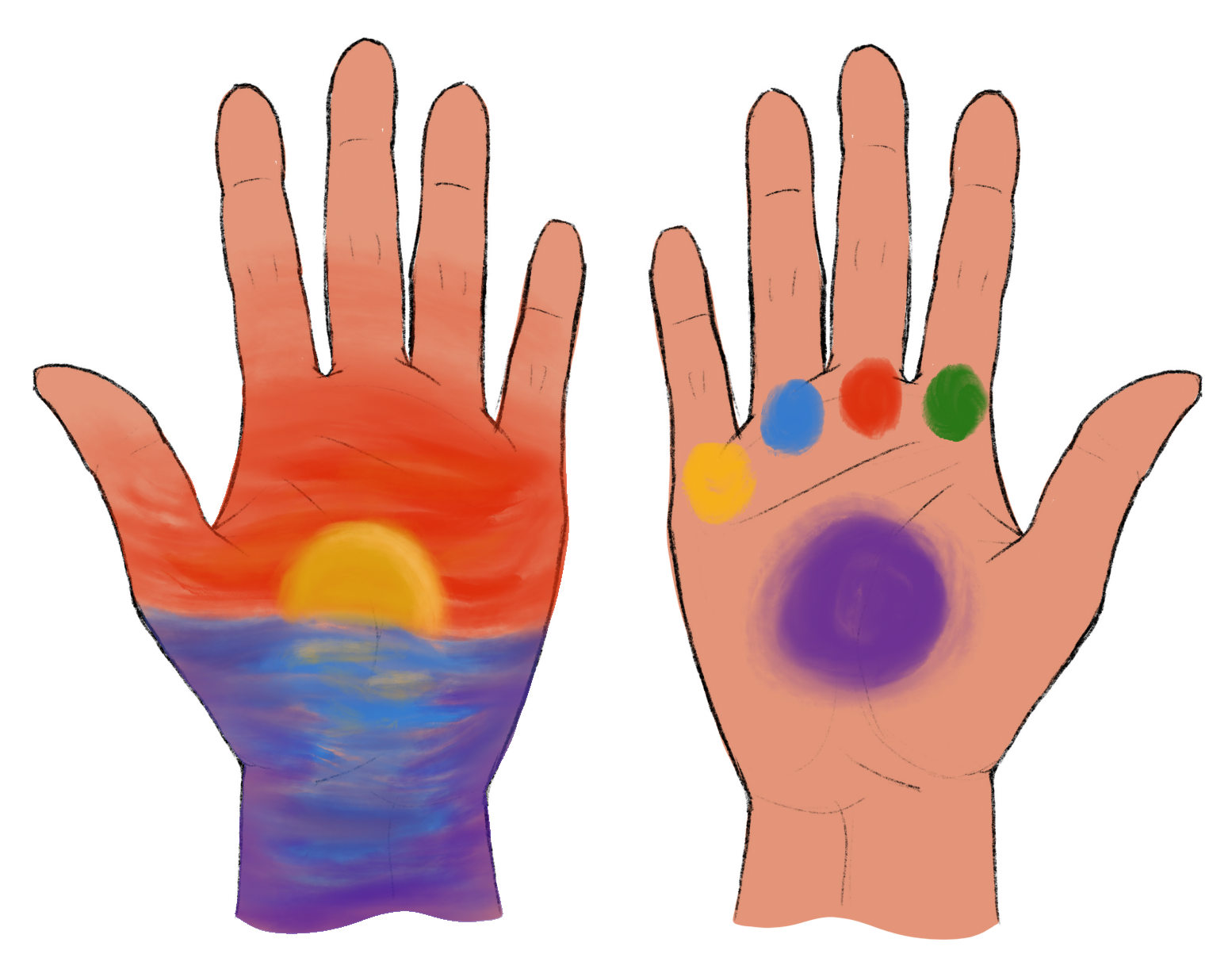

Using both hands, one hand can serve as a canvas while the other serves as a palette. To use the palette, the person simply bends the fingers of the corresponding colours to mix them. The fingers of the palette hand can then be used to paint on the canvas hand.
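
The colour-mixing gesture can be sketched as a weighted blend in which each finger contributes its colour in proportion to how far it is bent. This assumes a hypothetical finger-to-colour palette and bend values in [0, 1] from a hand tracker. It uses a plain average in RGB, which is a simplification: real pigments mix subtractively, so averaging yellow and blue in RGB gives grey, whereas yellow and blue paint give green.

```python
from typing import Optional

RGB = tuple[int, int, int]

# Hypothetical palette: one paint colour per finger of the palette hand.
PALETTE: dict[str, RGB] = {
    "thumb": (255, 255, 255),  # white
    "index": (255, 0, 0),      # red
    "middle": (255, 255, 0),   # yellow
    "ring": (0, 0, 255),       # blue
    "pinky": (0, 0, 0),        # black
}


def mix(bends: dict[str, float], palette: dict[str, RGB] = PALETTE) -> Optional[RGB]:
    """Blend the colours of bent fingers, weighting each by its bend in [0, 1].

    Simplification: a linear average in RGB, not a model of pigment mixing.
    Returns None when no finger is bent.
    """
    # Clamp bends to [0, 1] and ignore fingers that have no colour assigned.
    weights = {f: min(max(bend, 0.0), 1.0) for f, bend in bends.items() if f in palette}
    total = sum(weights.values())
    if total == 0:
        return None
    r, g, b = (
        round(sum(palette[f][c] * w for f, w in weights.items()) / total)
        for c in range(3)
    )
    return (r, g, b)
```

For example, fully bending the index and middle fingers, `mix({"index": 1.0, "middle": 1.0})`, gives an orange of `(255, 128, 0)`. Swapping in a subtractive or pigment-based mixing model would only change the body of `mix`.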

Embodied Playful Activities in Augmented Reality

To follow up on this work, we designed and implemented three embodied playful activities in Augmented Reality.

In "Dragon Food", each hand embodies a dragon. Opening and closing the hand opens and closes the dragon's mouth. The green dragon only eats salty food, while the pink dragon only eats sweet food. Eat as much food as possible without getting hit by a bomb!
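
The dragon's mouth mechanic can be sketched as a normalized hand openness plus a rule that a bite happens when an open mouth snaps shut. Everything here is an assumption for illustration: the fingertip-to-palm calibration distances, the openness threshold, the names, and the choice that food of the wrong taste is simply not eaten. Bombs are left out of the sketch.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical calibration: mean fingertip-to-palm distance (metres)
# for a closed fist and for a flat, open hand.
FIST_DIST = 0.03
FLAT_DIST = 0.09
OPEN_THRESHOLD = 0.6  # the mouth counts as open above this openness


def hand_openness(tip_to_palm: list[float]) -> float:
    """Map fingertip-to-palm distances to an openness in [0, 1]."""
    mean = sum(tip_to_palm) / len(tip_to_palm)
    return min(max((mean - FIST_DIST) / (FLAT_DIST - FIST_DIST), 0.0), 1.0)


@dataclass
class Dragon:
    likes: str            # "salty" for the green dragon, "sweet" for the pink one
    mouth_open: bool = False
    score: int = 0

    def update(self, openness: float, food_in_mouth: Optional[str]) -> None:
        """Snapping the hand shut (open to closed) eats food of the right taste."""
        now_open = openness > OPEN_THRESHOLD
        if self.mouth_open and not now_open and food_in_mouth == self.likes:
            self.score += 1
        self.mouth_open = now_open
```

Counting only the open-to-closed transition means a player cannot score by keeping a fist closed while food drifts past.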

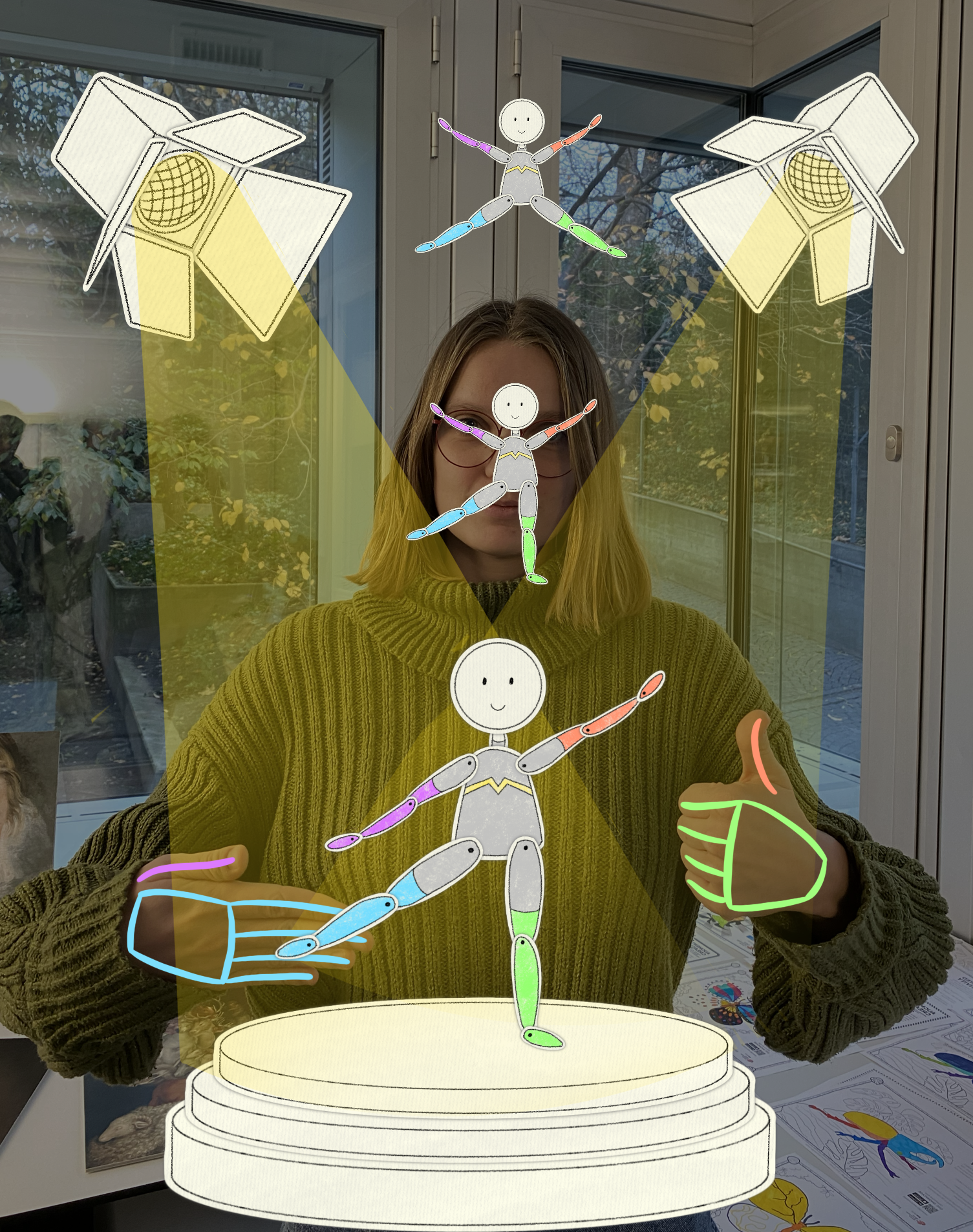

In "Puppet Dance", each hand controls part of the puppet's body. The thumb controls the corresponding arm, while the other fingers control the corresponding leg. Follow the music and perform the choreography to become a star!

In "Ocean Clean-Up", trick the fisherman into cleaning the ocean. To do so, mimic scissors to cut the fishing hook if it has caught a fish. Otherwise, mimic a camera to take a picture of a fish: the fish will pose for the picture and move more slowly. If the hook reaches the sea floor without catching a fish, it grabs trash instead.
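
The Ocean Clean-Up rules can be written as a per-tick update. This is a sketch under stated assumptions, not the thesis implementation: gesture recognition is assumed to yield a hypothetical label (`"scissors"`, `"camera"`, or `None`), the caller is assumed to set `has_fish` when the hook collides with a fish, and the sea-floor depth and slow-down factor are made-up values.

```python
from dataclasses import dataclass
from typing import Optional

SEA_FLOOR = 10.0   # hypothetical depth of the sea floor
SLOW_FACTOR = 0.5  # hypothetical slow-down for a posing fish


@dataclass
class Hook:
    depth: float = 0.0
    has_fish: bool = False   # set by the caller when the hook touches a fish
    has_trash: bool = False
    cut: bool = False


@dataclass
class Fish:
    base_speed: float = 1.0
    posed: bool = False

    @property
    def speed(self) -> float:
        return self.base_speed * (SLOW_FACTOR if self.posed else 1.0)


def step(hook: Hook, fish: Fish, gesture: Optional[str], descent: float = 1.0) -> None:
    """Advance the hook by one tick according to the player's gesture."""
    if hook.cut or hook.has_trash:
        return  # this hook is finished
    if hook.has_fish:
        if gesture == "scissors":
            hook.has_fish = False
            hook.cut = True  # the hook is cut and the fish swims free
        return
    if gesture == "camera":
        fish.posed = True  # the fish poses for the picture and slows down
    hook.depth = min(hook.depth + descent, SEA_FLOOR)
    if hook.depth >= SEA_FLOOR:
        hook.has_trash = True  # reached the floor empty: grab trash instead
```

Keeping the rules in one pure update function makes them easy to test separately from hand tracking and rendering.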

These activities were designed and implemented as part of Martina Kessler's master thesis. The design was influenced by our own work on digital gloves, as well as by work on hand interfaces [Pei 2022]. The pictures presented here are concept drafts, not final screenshots of the games.

Relevant References

[Pirker 2017] Johanna Pirker, Mathias Pojer, Andreas Holzinger, and Christian Gütl. 2017. Gesture-based interactions in video games with the leap motion controller. In International Conference on Human-Computer Interaction. Springer, 620–633.

[Piumsomboon 2013] Thammathip Piumsomboon, Adrian Clark, Mark Billinghurst, and Andy Cockburn. 2013. User-defined gestures for augmented reality. In IFIP Conference on Human-Computer Interaction. Springer, 282–299.

[Roo 2017] Joan Sol Roo, Renaud Gervais, Jeremy Frey, and Martin Hachet. 2017. Inner garden: Connecting inner states to a mixed reality sandbox for mindfulness. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems. 1459–1470.

[Gibbs 2005] Raymond W. Gibbs. 2005. Embodiment and cognitive science. Cambridge University Press.

[Nathan 2017] Mitchell J. Nathan and Candace Walkington. 2017. Grounded and embodied mathematical cognition: Promoting mathematical insight and proof using action and language. Cognitive Research: Principles and Implications 2, 1 (2017), 9.

[Mueller 2018] Florian ’Floyd’ Mueller, Richard Byrne, Josh Andres, and Rakesh Patibanda. 2018. Experiencing the body as play. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems. 1–13.

[Patibanda 2017] Rakesh Patibanda, Florian ’Floyd’ Mueller, Matevz Leskovsek, and Jonathan Duckworth. 2017. Life Tree: Understanding the design of breathing exercise games. In Proceedings of the Annual Symposium on Computer-Human Interaction in Play. 19–31.

[Pei 2022] Siyou Pei, Alexander Chen, Jaewook Lee, and Yang Zhang. 2022. Hand Interfaces: Using Hands to Imitate Objects in AR/VR for Expressive Interactions. In Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems. 1–16.

Team

- Julia Chatain - Noelle's Ark Implementation, System Design Study, Artwork for the AR embodied playful activities, Supervision

- Danielle M. Sisserman - Marble Runner Implementation

- Lea Reichardt - Space Traveller Implementation, Preliminary User Study

- Martina Kessler - Dragon Food, Puppet Dance, and Ocean Clean-Up Implementation

- Violaine Fayolle - Graphic Design, Video

- Prof. Dr. Robert W. Sumner - Computer Science Supervision

- Prof. Dr. Manu Kapur - Learning Sciences Supervision

- Dr. Fabio Zünd - Supervision

- Dr. Amit Bermano - Supervision

Publication

Chatain, Julia, Danielle M. Sisserman, Lea Reichardt, Violaine Fayolle, Manu Kapur, Robert W. Sumner, Fabio Zünd, Amit H. Bermano. 2020. DigiGlo: Exploring the Palm as an Input and Display Mechanism through Digital Gloves. In Proceedings of the Annual Symposium on Computer-Human Interaction in Play (CHI PLAY ’20), November 2–4, 2020, Virtual Event, Canada. ACM, New York, NY, USA, 12 pages.